Connect

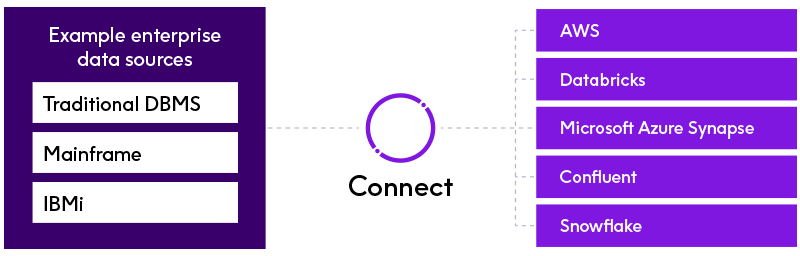

Integrate data seamlessly from legacy systems into next-gen cloud and data platforms with one solution

Seamless data access and collection

Connect helps you take control of your data from mainframe to cloud. Integrate data through batch and real-time ingestion for advanced analytics, comprehensive machine learning and seamless data migration.

Connect builds on the expertise Precisely has developed over decades as a leader in mainframe sort and IBM i data availability and security to lead the industry in accessing and integrating complex data. Support for a wide range of sources and targets covers your ETL and change data capture (CDC) needs, giving your most critical business projects access to all your enterprise data.

Sources and targets include:

Mainframe data

VSAM, IMS, COBOL copybooks, mainframe fixed and sequential files

RDBMS

Oracle, SQL Server, Db2 for z/OS, Db2 for i, Db2 for LUW, MySQL, Sybase, PostgreSQL

Semi-structured data

JSON, XML

Enterprise data warehouses

Teradata, IBM Netezza, Vertica, Greenplum

Cloud platforms

Amazon Web Services (AWS), Microsoft Azure, Google Cloud Platform

Cloud analytics

Big data

Hadoop, Hive, Impala, Apache Avro, Apache Parquet, Apache Spark

Streaming platforms

Apache Kafka

Flat files

Fixed length, variable length, delimited

Benefits

Simplify integration

Take a one-solution approach to integrate, prepare, load, cleanse, transform and stream data across clustered or cloud frameworks

Data access

Integrate all enterprise data, from mainframe and IBM i to cloud, while keeping integrations secure

Real-time replication

Stream changes to data instantly for use in downstream applications, data lakes and warehouses

Future-proof

Quickly and easily add new sources and targets. Deploy in new environments with no redevelopment required

Higher ROI

Extend the value of your mission-critical systems – everything from open systems databases to mainframe and IBM i to modern cloud platforms

Unrivaled scalability and efficiency for data replication

Replicate

Real-time access to data changes as they happen

Grow

Add next-gen targets, no coding required

Simplify

One solution for data replication and ETL

Access

All enterprise data sources

Mainframe access and integration

Mainframe application data enhances all types of strategic projects – from advanced analytics to machine learning and everything in between. With all that value, this data can’t be left out of your broader data integration strategy.

That said, it can be difficult or impossible for some businesses to access, integrate, or migrate mainframe data.

For most organizations, mainframe data integration success depends on specialists who understand legacy data. But these skills are in short supply, so data integration solutions are key: they bring mainframe data into an intuitive interface that runs on any computing environment. That way, you’re set up for success with your existing resources.

With high-performance solutions built on Precisely Connect, you directly access, understand, and transform complex mainframe data. Connect ensures that mainframe data reaches your platform of choice for advanced analytics, fraud detection, machine learning, and more.
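
To make "complex mainframe data" concrete, here is a minimal Python sketch of decoding one fixed-length EBCDIC record into named fields. It is an illustration only, not Connect's implementation or API: the `LAYOUT` table, the field names, and the `decode_record` helper are hypothetical, and the sketch assumes a text-only record in code page 037. Real COBOL copybooks often include packed-decimal and binary fields, which this sketch does not handle.

```python
# Hypothetical record layout: (field name, byte offset, byte length).
# In practice a layout like this would be derived from a COBOL copybook.
LAYOUT = [
    ("customer_id", 0, 6),
    ("name", 6, 10),
    ("state", 16, 2),
]


def decode_record(raw: bytes, layout=LAYOUT, encoding: str = "cp037") -> dict:
    """Decode a fixed-length EBCDIC record into a dict of trimmed strings."""
    # cp037 is a single-byte code page, so character offsets match byte offsets.
    text = raw.decode(encoding)
    return {
        name: text[start:start + length].strip()
        for name, start, length in layout
    }


# Build a sample EBCDIC record from ASCII text for demonstration purposes.
sample = "000042JANE DOE  NY".encode("cp037")
record = decode_record(sample)
print(record)
```

Keeping the layout as data rather than code means a new record type only needs a new layout table, which is the general idea behind describing legacy files with copybooks.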

Learn more about the mainframe access and integration capabilities of Connect.

The Precisely and AWS partnership

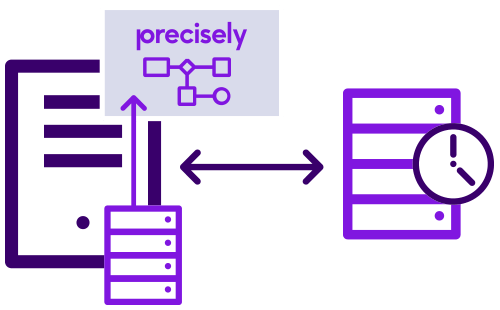

Precisely and Amazon Web Services (AWS) are collaborating to integrate Precisely Connect into the AWS Mainframe Modernization service, enabling customers to replicate mainframe data onto the AWS cloud platform in real time. By accelerating access to mainframe data on AWS, businesses can get mainframe migration projects done on time and on budget, all while extending the value of mission-critical, high-investment mainframe systems.

Real-time data replication with CDC

Living in a world of delayed data means making business decisions with old information. You need data replication solutions that capture and reflect data changes to your analytics and reporting layer as they happen. Connect is a highly versatile solution that helps you build data pipelines that share changes to application data as they occur.

Connect’s real-time replication keeps databases in sync for reporting, analytics, and data warehousing. You can replicate changes as they happen across hierarchical data stores (IMS, VSAM), relational databases, streaming frameworks, and the cloud, in a variety of architectures and topologies. Connect’s resilient data delivery guarantees that you never experience interruptions in your data flow.

With Connect, you can focus on your business, not your systems. Get the changes you need in real time without overloading networks or affecting performance.

To learn how Connect can help you build real-time streaming data pipelines, read our eBook: Streaming Legacy Data for Real-Time Insights

Optimize environments and maximize performance

You need data integration solutions that help accommodate growing volumes of data, users, and unpredictable peak usage demands. For that, you need predictable performance and scalability. Connect offers both.

Connect is the only solution with a self-tuning engine that dynamically selects the most efficient algorithms based on data structures and system attributes at run time. Know that you’re getting the best performance possible, regardless of whether a job runs on-premises or in the cloud. For ETL processes, Connect users have experienced 10X faster performance and saved hundreds of hours of development.

Connect delivers unrivaled scalability and efficiency for data replication. Connect minimizes network impact by detecting changes via database and system logs and replicating them downstream. Connect does this all without affecting the applications that still run on those systems.
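
As a rough illustration of log-based change data capture, the Python sketch below replays an ordered list of change events against a target copy. It is a simplified model, not Connect's engine or API: the `ChangeEvent` type, the `apply_changes` function, and the in-memory dictionary standing in for a target table are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One change read from a (hypothetical) database or system log."""
    op: str                      # "insert", "update", or "delete"
    key: int                     # primary key of the affected row
    row: Optional[dict] = None   # new row image; None for deletes


def apply_changes(target: dict, events: list) -> dict:
    """Apply log events to the target in log order and return the target."""
    for event in events:
        if event.op in ("insert", "update"):
            # Copy the row image so the target does not share state with the log.
            target[event.key] = dict(event.row or {})
        elif event.op == "delete":
            target.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op}")
    return target


# Only the changes travel downstream, not full table snapshots.
log = [
    ChangeEvent("insert", 1, {"name": "Ada", "balance": 100}),
    ChangeEvent("insert", 2, {"name": "Grace", "balance": 250}),
    ChangeEvent("update", 1, {"name": "Ada", "balance": 75}),
    ChangeEvent("delete", 2),
]
replica = apply_changes({}, log)
print(replica)
```

Because the events come from a log rather than from queries against the source tables, the source application does not have to be modified or re-scanned, which is the property the paragraph above describes.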

Future-proof data transformations

You need solutions that can help you adapt your infrastructure to changing business and technology needs. Connect helps future-proof your data integration approach as both skills and technologies change. Connect makes it easy to integrate new sources and targets with a design-once, deploy-anywhere approach. You can visually design workflows for on-premises or cloud deployments to Spark, MapReduce, Linux, UNIX, or Windows. You never need to rewrite jobs or invest in new, specialized skills to scale data integration needs.

Connect ensures you do not have to worry about the platform you run on or the location where your valuable data resides. Easily import data for hundreds or even thousands of tables living in your databases without manual effort. With Connect, point, click and onboard entire schemas from a database in minutes.

“Integrating Precisely Connect with our Teradata environment was by far the best IT investment for United Rentals in many years.”

Jim Malin

United Rentals